Key Takeaways

- For many applications, resistance measurements are straightforward, but this is not always the case.

- Low resistance measurements require special tools and techniques to get accurate results.

- There are test methods to capture the impact of temperature on resistance.

Measuring resistance seems like a trivial task: hook up a standard handheld multimeter and touch its two probes to either side of the device or component that you want to measure. For the most part, this process works well. More precisely, it is a good technique for devices with resistances between 2 Ohms and 1 MOhm. Once the resistance values get very high or very low, however, the process becomes more complicated. While the basic physics remains the same (Voltage = Current × Resistance), getting the setup to correctly measure the component requires attention to detail.

Where do handheld units fail?

The issue with most handheld meters is that the accuracy in the low range (0 – 100 Ohms) is limited to ±0.1 Ohms. This creates a measurement threshold for a lot of applications. If a conductor resistance measurement is performed on 3,000 feet of wire, the accuracy has a minimal impact. For example, 3,000 ft of 20AWG wire yields about 29.6 Ohms (the maximum allowable resistance for 20AWG AS22759/18 wire). With an accuracy of ±0.1 Ohms, the uncertainty of the resistance per foot is about ±33 µOhms. Compare this to the measurement of 10 ft of 20AWG wire of the same specification: a handheld meter would measure about 0.1 Ohms, with an uncertainty for the 10 ft sample of ±0.1 Ohms. A measurement uncertainty equal to the measurement itself is rarely acceptable.
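The arithmetic behind this comparison can be sketched in a few lines. This is only an illustration using the figures quoted above (±0.1 Ohm meter accuracy, 29.6 Ohms per 3,000 ft); the function names are made up for this example.

```python
def per_foot_uncertainty(meter_accuracy_ohms: float, length_ft: float) -> float:
    """Meter accuracy spread evenly over the measured length (ohms per foot)."""
    return meter_accuracy_ohms / length_ft


def relative_uncertainty(meter_accuracy_ohms: float, reading_ohms: float) -> float:
    """Meter accuracy expressed as a fraction of the reading."""
    return meter_accuracy_ohms / reading_ohms


METER_ACCURACY = 0.1        # ohms, low-range accuracy quoted in the text
OHMS_PER_FT = 29.6 / 3000   # 20AWG maximum resistance per foot, from the text

for length_ft in (3000, 10):
    reading = OHMS_PER_FT * length_ft
    rel = relative_uncertainty(METER_ACCURACY, reading)
    print(f"{length_ft} ft: reading {reading:.3f} ohm, "
          f"uncertainty {rel:.1%} of the reading")
```

For the 3,000 ft sample the uncertainty is well under 1% of the reading, while for the 10 ft sample it is roughly 100%.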

While the handheld multimeter does confirm the continuity of the circuit for short wire lengths, it does not do a good job of providing an accurate measurement. Other techniques have to be employed for an accurate reading.

Low Resistance Measurements

One problem with any low resistance measurement is surface resistance. Many metal surfaces will develop an oxidation layer. This oxidation layer creates a high surface resistance; you may experience this when measuring a sheet of metal only to find a higher than expected resistance. The way to handle this is with a little bit of surface preparation: cleaning the surface or lightly abrading it to break through the oxidation layer.

Making the Measurement

If using a two-point probe (such as is commonly used with handheld multimeters), then it is necessary to also account for the resistance of the probes and of the wires running to the measurement device. The best way to do this is to connect the two probes together and record the resistance. This resistance is then subtracted from the sample resistance measurement.

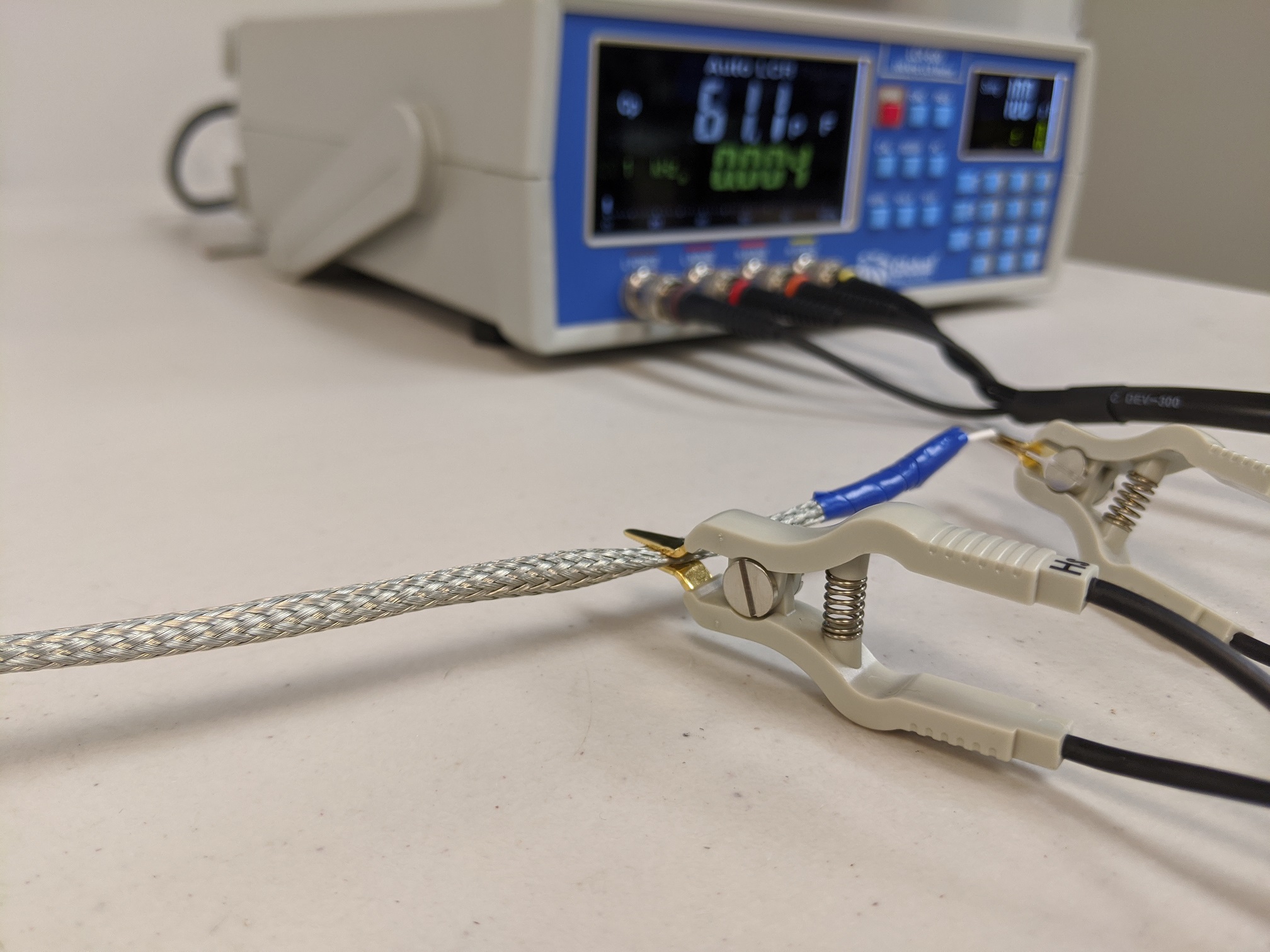
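The two-point zeroing step described above amounts to a single subtraction. A minimal sketch, with a hypothetical function name and hypothetical readings chosen only for illustration:

```python
def compensate_leads(raw_reading_ohms: float, shorted_probe_ohms: float) -> float:
    """Subtract the probe-and-lead resistance from a two-point reading."""
    if shorted_probe_ohms > raw_reading_ohms:
        # A lead resistance larger than the reading points to a bad zero measurement.
        raise ValueError("lead resistance exceeds the raw reading")
    return raw_reading_ohms - shorted_probe_ohms


# Hypothetical values: 0.15 ohm with the probes shorted, 0.42 ohm across the sample.
sample_ohms = compensate_leads(raw_reading_ohms=0.42, shorted_probe_ohms=0.15)
print(f"Sample resistance: {sample_ohms:.2f} ohm")
```

Note that the subtraction removes the lead resistance but not the meter's own accuracy limit, so the corrected value is still only as good as the instrument's resolution.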

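When dealing with low resistance measurements, a four-point probe is preferred. A four-point probe is a special device based on a Kelvin bridge design. The basic idea is that the test current is driven through one pair of leads while the voltage across the sample is sensed through a separate pair. Because almost no current flows in the voltage-sensing leads, the lead and contact resistances drop out of the measurement, which provides a high level of resistance measurement accuracy.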

ASTM B193

There are many standards that cover the testing of conductors; the discussion here focuses on ASTM B193. This standard, first released in 1987, provides a general test method intended to address the broad needs of the wire and cable industry.

There are a couple of elements of this test that are unique to testing conductors. The dimensions of the cable under evaluation must be measured, and so must the specimen’s weight.

For testing, the sample is prepared by stripping the insulation from both ends of the wire or cable. Depending on the sample, there are a couple of means of test measurement:

Option #1: Attach the sample to the resistance measurement device and record the result. This is possible if the sample’s resistance is high or the equipment has a high accuracy.

Option #2: If the first method is not viable, then an alternative method must be employed. The sample is attached to a current-controlled DC power supply. The current through the sample is limited, and both the current and the voltage across the sample are recorded. While this method still employs Ohm’s law, the use of a higher voltage and current allows for a better measurement of the sample.

With this method, it is important that the current remain at less than 10% of the wire’s rating. Doing so avoids conductor heating that will alter the resistance measurement.
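As a rough sketch of Option #2, the resistance is the recorded voltage divided by the recorded current, with a guard for the 10% current limit mentioned above. The function name, the rated current, and the readings are hypothetical and used only for illustration.

```python
def resistance_from_vi(voltage_v: float, current_a: float,
                       rated_current_a: float, max_fraction: float = 0.10) -> float:
    """Return R = V / I, rejecting test currents at or above 10% of the rating."""
    if current_a <= 0:
        raise ValueError("test current must be positive")
    if current_a >= max_fraction * rated_current_a:
        # Too much current heats the conductor and shifts its resistance.
        raise ValueError("test current too high for this conductor rating")
    return voltage_v / current_a


# Hypothetical example: 0.5 A through a wire assumed to be rated for 10 A,
# with 49.3 mV recorded across the sample.
r_ohms = resistance_from_vi(voltage_v=0.0493, current_a=0.5, rated_current_a=10.0)
print(f"Sample resistance: {r_ohms:.4f} ohm")
```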

Temperature Adjustment

In the ASTM B193 standard, a formula is provided to account for measurement at different temperatures. This formula is based on known properties of the conductor and its resistance response to different temperatures. For those materials that do not have a well-defined temperature relationship, there are methods to capture this parameter.
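The exact formula and coefficients should be taken from the standard itself. As an illustration only, the sketch below uses the widely used linear temperature-coefficient model, R_ref = R_measured / (1 + α (T_measured − T_ref)), with a default α of 0.00393 per °C at 20 °C, a commonly cited value for annealed copper. The function name and the example reading are assumptions, not values from ASTM B193.

```python
def correct_to_reference(r_measured_ohms: float, t_measured_c: float,
                         t_ref_c: float = 20.0, alpha_ref: float = 0.00393) -> float:
    """Convert a resistance measured at t_measured_c to the reference temperature.

    Assumes a linear model with alpha_ref defined at t_ref_c (per degree C).
    """
    return r_measured_ohms / (1.0 + alpha_ref * (t_measured_c - t_ref_c))


# Hypothetical reading: 10.0 ohm measured in a 30 C lab, corrected to 20 C.
r_20 = correct_to_reference(r_measured_ohms=10.0, t_measured_c=30.0)
print(f"Resistance corrected to 20 C: {r_20:.3f} ohm")
```

For a conductor with a positive temperature coefficient, a reading taken above the reference temperature is corrected downward, and one taken below it is corrected upward.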

A test method has been developed by the SAE 8D thermal modeling task group; while it is not part of the current revision, the test method should be part of the AS4373 test standard in the next revision. In this test method, the wire is submerged into a temperature-controlled fluid. The fluid is used to ensure the wire is at thermal equilibrium when any resistance measurement is made.

Progressively, the temperature is increased, and the resistance measurements are made at several temperatures. From these measurements, a best fit curve is created so that the conductor resistance may be calculated at different temperatures.
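The curve-fitting step can be sketched as an ordinary least-squares fit. The example below assumes a straight-line relationship between resistance and temperature, which may not hold for every material, and the data points are hypothetical rather than measured values.

```python
def fit_line(temps_c, resistances_ohms):
    """Least-squares fit of R = intercept + slope * T; returns (slope, intercept)."""
    n = len(temps_c)
    if n < 2 or n != len(resistances_ohms):
        raise ValueError("need at least two matched (temperature, resistance) points")
    mean_t = sum(temps_c) / n
    mean_r = sum(resistances_ohms) / n
    sxx = sum((t - mean_t) ** 2 for t in temps_c)
    if sxx == 0:
        raise ValueError("temperatures must not all be identical")
    sxy = sum((t - mean_t) * (r - mean_r)
              for t, r in zip(temps_c, resistances_ohms))
    slope = sxy / sxx
    return slope, mean_r - slope * mean_t


def predict(slope, intercept, temp_c):
    """Resistance estimated from the fitted line at temp_c."""
    return intercept + slope * temp_c


# Hypothetical bath temperatures (C) and resistance readings (ohm).
temps = [20.0, 60.0, 100.0, 140.0]
readings = [1.000, 1.160, 1.320, 1.480]

slope, intercept = fit_line(temps, readings)
print(f"Estimated resistance at 80 C: {predict(slope, intercept, 80.0):.3f} ohm")
```

If the measured points show visible curvature, a higher-order fit would be the natural extension; the linear form is used here only to keep the sketch short.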

Conclusion

Whether the test is performed in accordance with ASTM B193, ASTM D3032, or any other test method, testing conductor resistance comes down to applying a voltage and current to capture a conductor’s resistance value.

If you are looking for a lab to help you with your cable assessment needs, contact Lectromec. We have you covered.